arXiv preprint, 2026

A next-scale token map prediction framework with a multi-scale skeletal-temporal hierarchy for human motion generation, enabling zero-shot motion editing.

I am a final-year PhD candidate in Electrical and Computer Engineering at Seoul National University (SNU), working in the 3D Vision Lab, advised by Prof. Young Min Kim. Previously, I was a Research Scientist Intern at Snap Research.

My research focuses on generative motion modeling for Embodied Motion Intelligence, with an emphasis on controllable and robust motion generation under sparse, noisy, and causal conditions, alongside text-driven motion synthesis.

arXiv preprint, 2026

An online causal framework for full-body motion reconstruction from sparse and noisy egocentric observations using diffusion forcing.

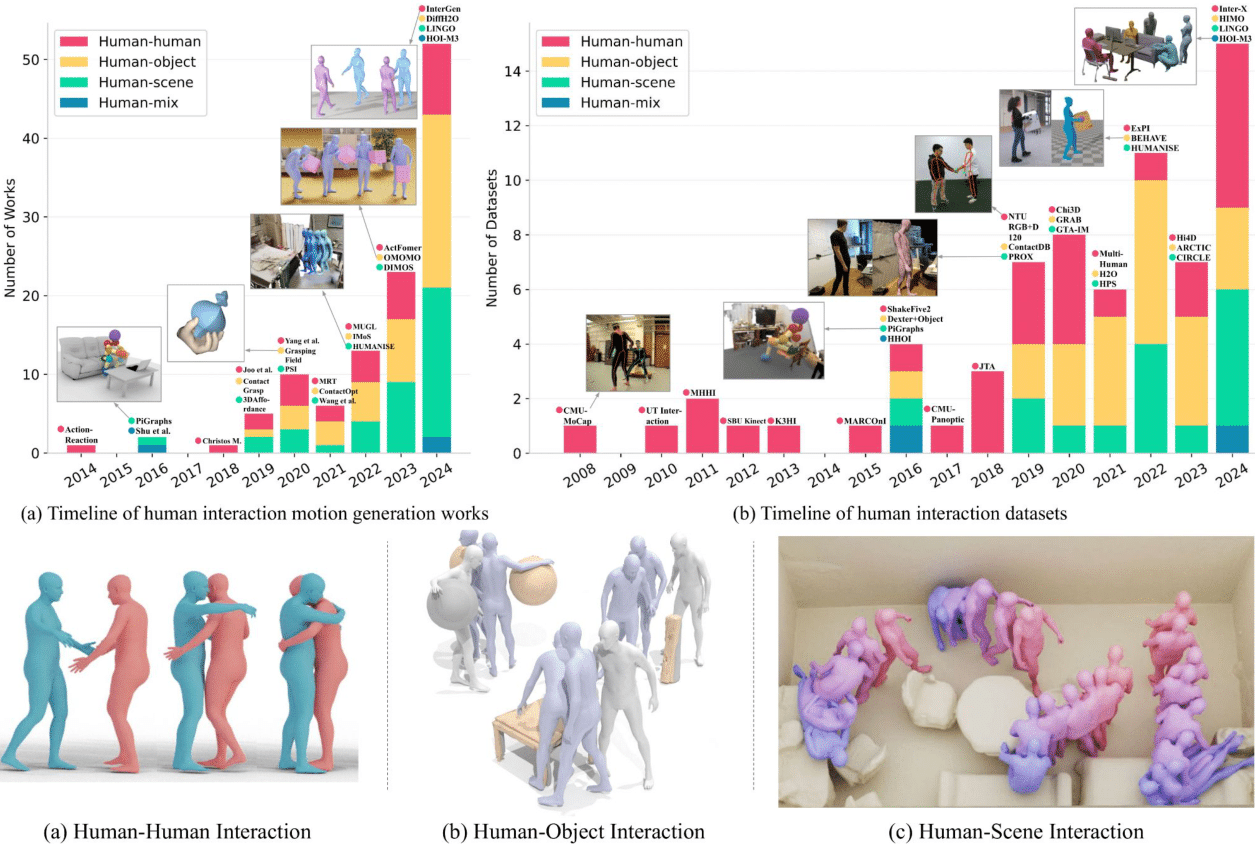

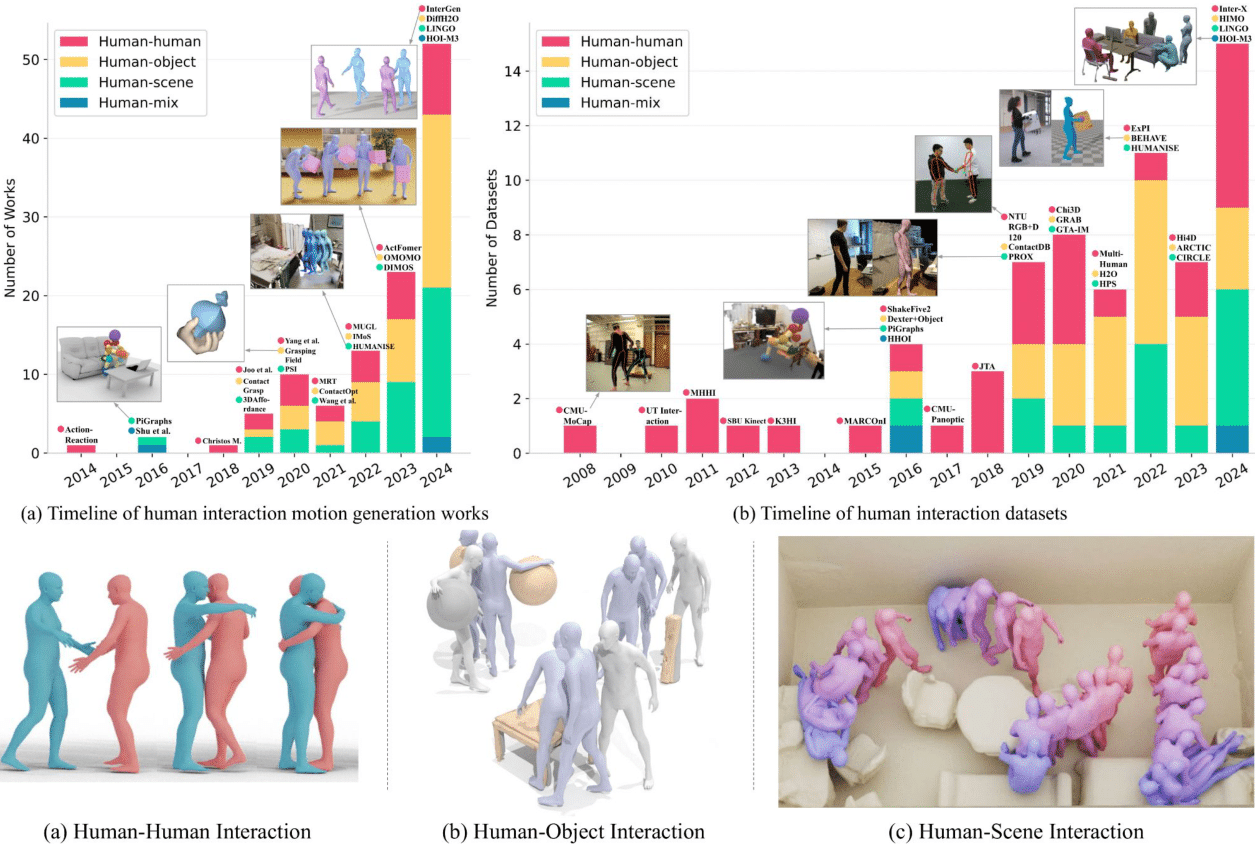

IJCV, 2026

A review of recent advances in human interaction motion generation: human-human, human-object, human-scene, and human-mix interactions.

NeurIPS, 2025

A large-scale text-motion dataset featuring high-quality motion capture and expressive textual annotations, alongside a masked modeling framework with multi-scale tokens.

ICCV, 2025 Highlight

Models human-scene interaction as in-betweening, while remaining robust to inaccurate keyframes and supporting practical applications such as video-based HSI reconstruction.

ICCV, 2025

A controllable motion synthesis pipeline for high-quality motion generation from sparse control signals, including time-agnostic motion control without explicit timing signals.

ICCV, 2025

A sparse keyframe-based motion diffusion model that better captures text prompts and improves overall motion quality.

ICCV, 2025

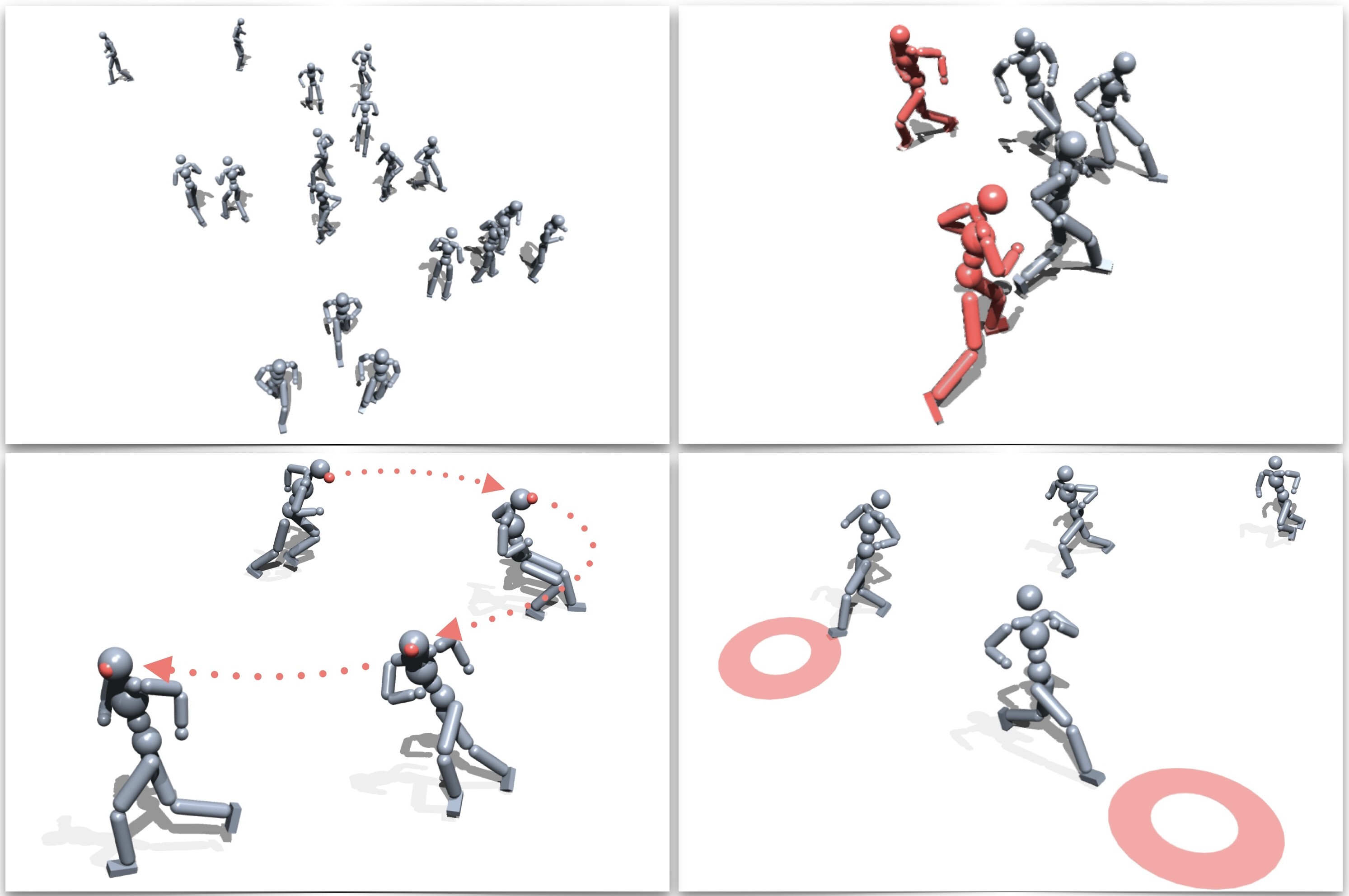

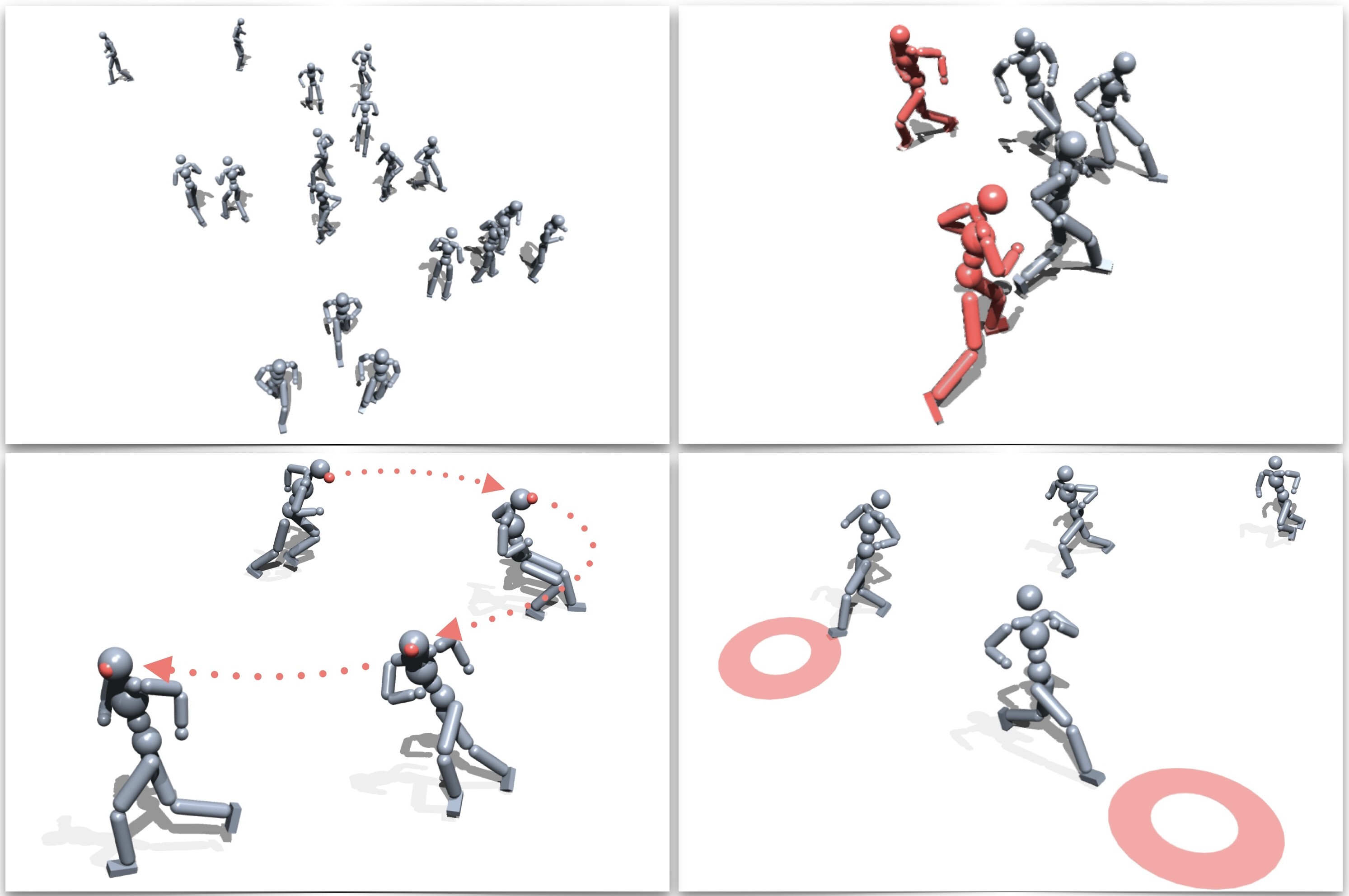

A framework that creates lively virtual dynamic scenes with contextual motions of multiple humans.

CVPR Workshop (HuMoGen), 2025

A motion generation pipeline conditioned on predefined key joint goal positions and a 3D environment.

Eurographics, 2025

Integrates continuous and discrete latent representations so that physically simulated characters can efficiently use motion priors and adapt to diverse challenging control tasks.

CVPR, 2023 Highlight

Automatically creates realistic, part-aware textures for virtual scenes composed of multiple objects.

Eurographics Short, 2023

Processes sparse, noisy point cloud input and generates high-quality stylized output.

WACV, 2023

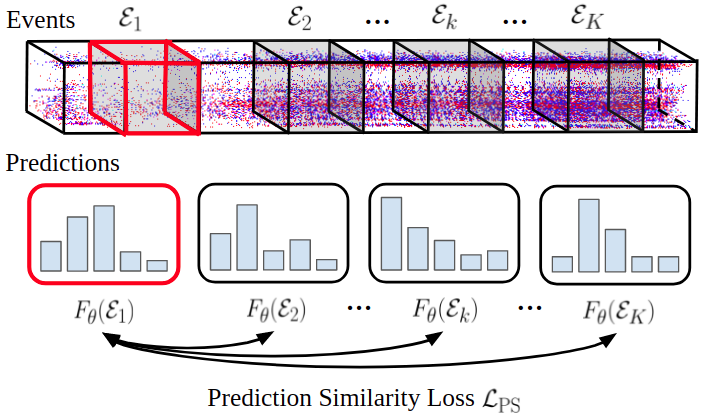

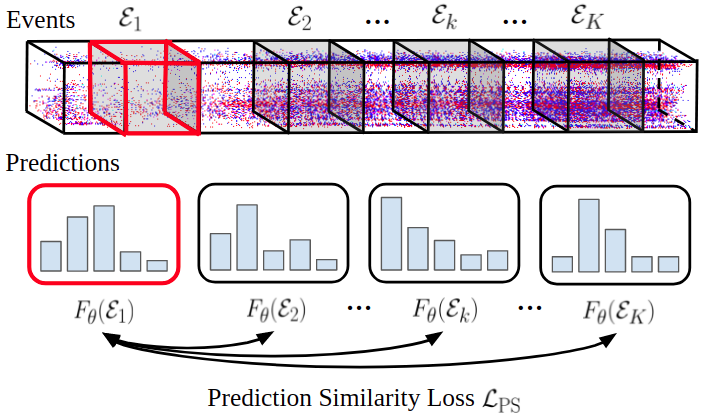

A Neural Radiance Field derived from event data that serves as a basis for various event-based applications and is robust to sensor noise.

CVPR, 2022

A simple and effective test-time adaptation algorithm for event-based object recognition that adapts classifiers to various external conditions.

IROS, 2022

A real-world grasping algorithm that generalizes to transparent and opaque objects via masks.

Research Scientist Intern

Mentors: Bing Zhou, Chuan Guo, Jian Wang

Physically plausible reconstruction of human motion and scenes from real-world videos. This work resulted in SceneMI, published at ICCV 2025 as a Highlight.